Tag: archaeological science

-

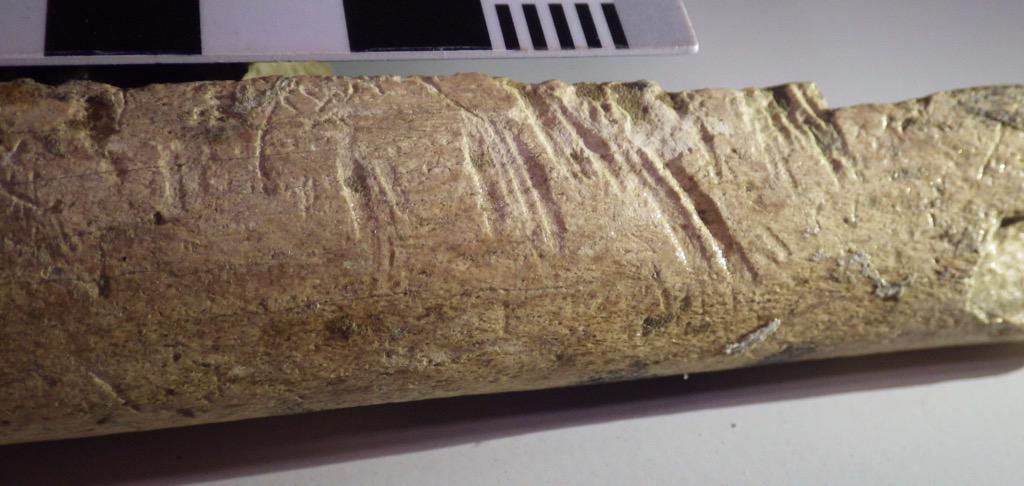

OM NOM NOM (Part Two) or Did I REALLY Use That Same Old Bad Joke To Introduce A Post on Butchery?

Okay…I know I said that I wouldn’t use that extremely bad, extremely old joke to introduce a blog post…but this one is basically a companion piece to the previous OM NOM NOM post on gnawing, so it doesn’t count…I think. Well, I promise I won’t use it again after this, okay? Okay. Anyway, let’s talk…

-

“Start at the Beginning, and When You Get to the End, Stop” – The Archaeology of Time

At the time of writing this blog post, we are only three days into 2019. I’ll be honest – I’ve experienced 25 years on this planet and I still make New Year’s resolutions. The usual ones, of course: exercise more, consume less sugar, etc. And, of course, these resolutions usually make it until mid-February before…

-

The Perfect Pokemon: A Brief Look at Selective Animal Breeding

Is there a “Perfect Pokemon”? Well, I guess technically there is the genetically engineered Mewtwo…but what about “naturally occurring” Pokemon? Can Trainers “breed” them for battle? A form of “Pokemon breeding” has been a vital part of the competitive scene for years. Players took advantage of hidden stats known as “Individual Values”, or “IVs”, which…

-

A Lesson in Taphonomy with Red Dead Redemption 2

Red Dead Redemption 2 (Rockstar Studios 2018) has only been out for a short while, but many players have been praising the level of detail that has gone into the game. One of the most striking features, at least to me as an archaeologist, is the fact that bodies actually decay over time. That’s right,…

-

On Weird Animal Bone Science, or How I’ve Become Accustomed to Watching Fish Bones Dissolve in Acid

If you’ve been reading this blog for a while, you probably have a good idea of what zooarchaeology is (and if you’re new, feel free to read that post here). But it’s not just about looking at animal bones and identifying them…well, okay, it’s a lot of that. But there’s lots more to it than…